Why Your AI Transformation Will Fail (And How to Fix It)

Most AI transformations fail because companies treat them as tech projects, not people projects. Here's the talent and culture playbook that separates winners from losers.

TL;DR

AI transformation isn't a technology problem. It's a talent and culture problem. Companies that invest in change management are far more likely to succeed, yet only a third fund it meaningfully. The winners are building smaller, elite teams of AI-proficient employees who can do the work of 10+ traditional hires. Here's what that actually looks like in practice.

What McKinsey's 2025 report actually reveals

I spent last week reading through McKinsey's 2025 State of AI report and the many Twitter and LinkedIn threads, blog posts, and articles it spawned. The findings were striking, but not in the way most headlines covered them.

Everyone focused on adoption rates and technology deployment. What caught my attention was the widening performance gap: why some organizations are extracting massive value from AI while most struggle to scale beyond pilot projects.

The answer isn't better models or bigger budgets. It's talent and culture. The companies winning at AI have fundamentally rethought how they hire, train, structure teams, and measure success. Those that haven't are burning money on tools nobody uses effectively.

AI transformation is a people project disguised as a tech upgrade

Here's the pattern McKinsey documented (and I keep seeing firsthand): companies buy Copilot, ChatGPT, Cursor, or Claude Code licenses for everyone, announce an AI strategy, and maybe hire a Chief AI Officer (like yours truly). Six months later, nothing has fundamentally changed: people are still working the same way, just with expensive tools they barely use.

The problem isn't the technology. As we covered in the Four Elements framework, most people aren't invested enough in company outcomes to justify changing how they work. AI transformation requires rethinking workflows, incentives, and roles, but only a small percentage of organizations invest significantly in the change management, training, and culture work that makes this possible.

The data is stark: companies that do invest in change management are more likely to exceed their AI project expectations. Yet most skip this step entirely, treating AI adoption as a tech rollout rather than an organizational transformation.

The companies winning at AI recognize that four critical culture shifts are required:

Trust and data fluency

Your team needs to trust AI outputs and have the data literacy to scrutinize them. High-performing organizations report employees are 3× more likely to trust AI guidance over gut instinct because they've invested in education and transparent validation. This isn't about blind faith in AI. It's about raising the baseline so teams can partner with AI effectively.

We learned this building our support agent Nia. The agent exposed every gap in our documentation. Teams that can't articulate their processes clearly enough for an AI to follow them can't scale with AI. They just create slop.

Agility and experimentation

AI enables rapid iteration, but only if your culture allows it. Organizations that prize "fail fast" experimentation and (actual) cross-functional collaboration see dramatically better results. As one chief digital officer put it: "With AI investments, you need experimentation and learning from failures. It's a big change." If you're not actually failing fast, you're not actually experimenting.

This means shorter planning cycles and empowering small teams to prototype quickly. Some companies are restructuring entirely, embedding developers with subject matter experts so they can "vibe work" and ship in days instead of months. Leadership also needs to reset its expectations about how many experiments should succeed. If everything succeeds, you're not trying hard enough ;-) This is easy to write, but killing an initiative and accepting the sunk costs is something most companies struggle with. Yet it is critical to the success of AI transformation.

Change management and training

The soft stuff is the hard stuff. High performers provide extensive training and upskilling to help employees integrate AI into daily work. This isn't about measuring outputs alone. It's about teaching people new ways to think and work.

Organizations that actively manage the human side (training, communication, incentives) are dramatically more likely to achieve desired outcomes. Those that don't breed resistance and watch pilots die in committee.

Leadership vision and modeling

Culture change starts at the top. In companies extracting real value from AI, senior executives actively use AI tools in their own work. They don't just mandate adoption, they model it. This means they fail, they learn, and they do so publicly.

But vision alone isn't enough. Leaders need to answer: What will workflows look like? Will AI make jobs better? How does efficiency translate into growth rather than layoffs? Without clear answers, you'll get compliance at best, sabotage at worst.

More importantly, leaders need to provide clarity, purpose, and direction to the team. As Musk once said, the "output of any company is the vector sum of the people within it."

The part he misses is that the vectors need to point in the same direction for the sum to be maximized. This is why leadership matters so much, especially in an AI transformation, where any slight drift gets dramatically amplified.
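To make the alignment point concrete, here is a toy model in Python. It treats each person as an "effort" vector of equal length, where only the direction differs, and adds a `leverage` multiplier as a stand-in for AI amplification. The function name, the angles, and the leverage values are all illustrative assumptions, not measurements.

```python
import math

def team_output(angles_deg, leverage=1.0):
    """Length of the vector sum of each person's effort (toy model).

    Every person contributes effort of length `leverage` (1.0 = no AI
    amplification); only the direction, in degrees, differs.
    Angles and leverage are hypothetical, chosen for illustration.
    """
    x = sum(leverage * math.cos(math.radians(a)) for a in angles_deg)
    y = sum(leverage * math.sin(math.radians(a)) for a in angles_deg)
    return math.hypot(x, y)

aligned = [0] * 10                                     # everyone pulls the same way
drifting = [-40, -30, -20, -10, 0, 0, 10, 20, 30, 40]  # modest drift around the goal
scattered = [i * 36 for i in range(10)]                # directions spread evenly

for leverage in (1.0, 10.0):
    a = team_output(aligned, leverage)
    d = team_output(drifting, leverage)
    s = team_output(scattered, leverage)
    print(f"leverage {leverage:>4}: aligned {a:.2f}, drifting {d:.2f}, "
          f"scattered {s:.2f}, lost to drift {a - d:.2f}")
```

With these made-up angles, the drifting team loses less than 1 unit of output at leverage 1 but nearly 9 units at leverage 10: the same misalignment costs ten times as much in absolute terms once every person is amplified, while a fully scattered team cancels out to roughly zero at any leverage.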

The death of the "hire more people" strategy

Here's the uncomfortable truth: AI-native companies generate $3.48 million in revenue per employee. Traditional SaaS companies average $610K. Most traditional companies struggle to hit $200K per employee.

That's not a 10% difference. It's a roughly 6× gap against the SaaS average, and more than 17× against the $200K mark.

Midjourney generates $200 million in annual revenue with 11 employees. That's roughly $18 million per employee. They don't grow by hiring more people. They grow by amplifying what small teams can do with AI systems.
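A quick check of the arithmetic behind these comparisons, using only the figures quoted above. Revenue per employee is a proxy for productivity rather than a direct measure of it, and the variable names here are just labels for the quoted numbers.

```python
# Revenue-per-employee figures quoted in the text (USD per year).
AI_NATIVE = 3_480_000
SAAS_AVERAGE = 610_000
TRADITIONAL_TYPICAL = 200_000

def revenue_per_employee(annual_revenue, headcount):
    """Annual revenue divided evenly across headcount."""
    return annual_revenue / headcount

# Midjourney: $200M annual revenue, 11 employees (as quoted above).
midjourney = revenue_per_employee(200_000_000, 11)

print(f"Midjourney:                 ${midjourney / 1e6:.1f}M per employee")
print(f"AI-native vs SaaS average:  {AI_NATIVE / SAAS_AVERAGE:.1f}x")
print(f"AI-native vs typical firm:  {AI_NATIVE / TRADITIONAL_TYPICAL:.1f}x")
print(f"Midjourney vs SaaS average: {midjourney / SAAS_AVERAGE:.1f}x")
```

The ratios come out to about 5.7× against the SaaS average and 17.4× against the $200K mark, with Midjourney at roughly 30× the SaaS average.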

Each person at Midjourney manages AI systems that handle what hundreds of humans used to do. Customer service, quality assurance, onboarding: all largely automated and operating at scale 24/7.

Why this matters for your workforce strategy

The old playbook was: hire armies of junior staff, oversee them with experienced managers, scale through headcount. That model is dead!

A lean team of AI-proficient talent can outproduce a massive team of less skilled workers by orders of magnitude. As VC Jeffrey Bussgang notes, a single AI-native "10× employee" can accomplish what used to take entire teams. His example: a 100× developer turning a six-month project into a six-day project.

This isn't hyperbole. With AI code generation, testing, and documentation tools, one engineer can handle what previously required a multi-person-month effort. Similar patterns emerge in marketing, sales, operations: anywhere routine work can be automated or AI-assisted.

The implication: you're better off with 10 AI-proficient employees than 100 traditional ones. Quality and AI literacy now beat quantity. But, and this is the kicker, finding these systems thinkers is hard. Retaining them is even harder, because they need high talent density around them, and talent density is inherently harder to raise in a large organization than in a small 10-person team.

The organizational restructuring nobody talks about

This creates uncomfortable questions about organizational design:

If one person can manage the output of 10 through AI orchestration, what happens to middle management? If AI agents perform 80% of routine tasks, how do career ladders work? If you can scale revenue 10× without scaling headcount proportionally, how do you think about growth?

These aren't theoretical. Nearly two-thirds of companies are still only piloting AI, struggling to scale it because they haven't rethought their org structure. They're layering AI onto existing processes instead of redesigning how work gets done.

The companies that do restructure report improvements in innovation, customer satisfaction, and talent retention. Mundane work gets automated, people focus on high-impact activities, and productivity compounds. However, the transition is always painful and often involves a complete change of leadership.

Building your AI-native workforce: the tactical playbook

Knowing you need AI-proficient talent is one thing. Actually building that capability is another. Here's what works:

Upskill ruthlessly, hire strategically

Start with your existing team. 75% of organizations show no strong preference for external hiring over retraining, recognizing current staff can and should be elevated with AI skills.

Effective programs teach specific, role-appropriate AI skills. Non-technical employees learn data literacy and prompt engineering basics. Technical staff deepen expertise in model development and agent orchestration. This builds a pipeline from within and reduces fears of obsolescence.

But you also need to change how you hire. Leading firms now screen candidates for AI-native traits, not just traditional qualifications. Bussgang's advice: ask candidates to demonstrate how they use AI as a copilot in their work. Those who habitually integrate AI into problem-solving become your high-impact performers.

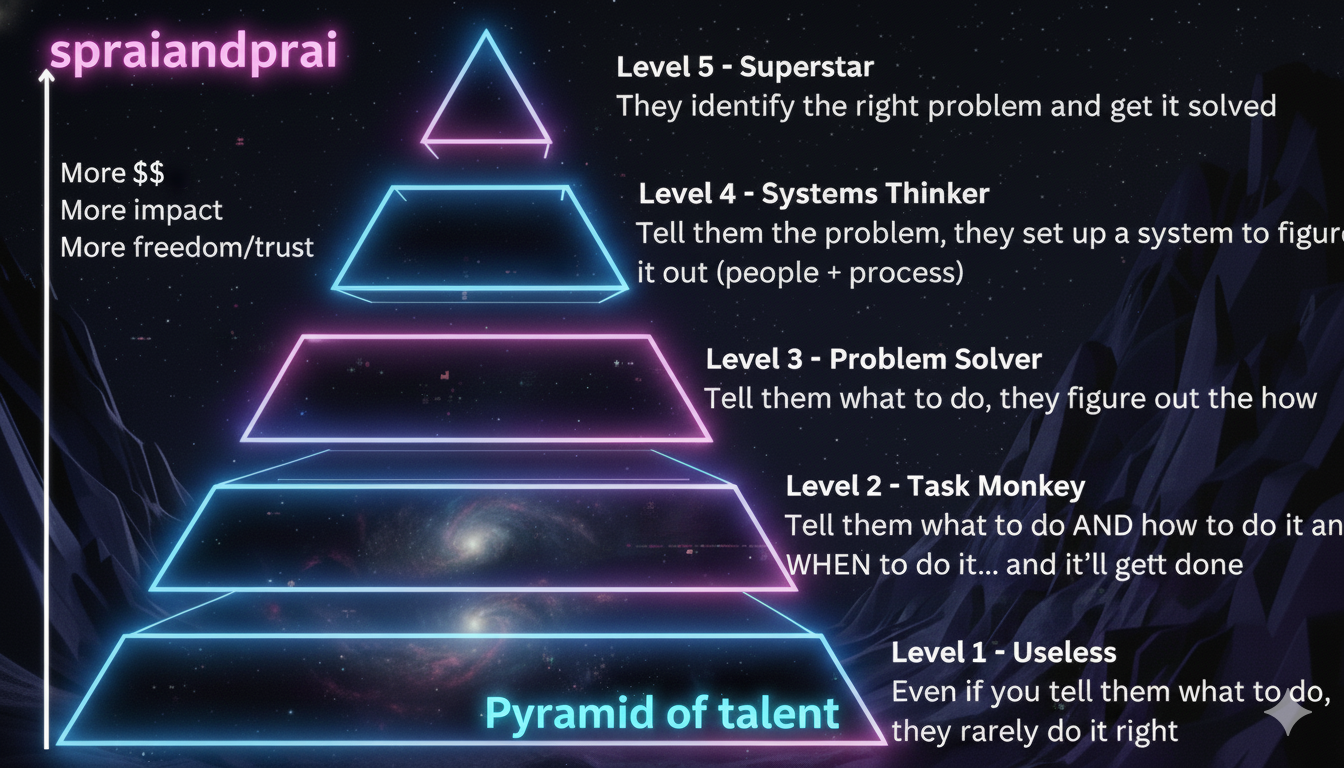

When we rebuilt our engineering org to be AI-native, we didn't just add tools. We changed what we valued: documentation quality, spec clarity, agent-first thinking. Not everyone leveled up. The gap between Level 3 and Level 5 engineers became stark.

Workforce planning for hybrid human-AI teams

High-performing organizations don't let talent needs emerge ad hoc. They create clear AI talent strategies that anticipate how roles will evolve.

This includes:

- Identifying new roles (AI Ops engineers, forward-deployed engineers, growth marketers, and so on)

- Determining which traditional roles evolve or phase out

- Mapping how to recruit or transition people into these positions (or out)

If AI automates routine analysis, you might reduce headcount there but ramp up data engineering or human-AI interaction design roles. Companies with formal AI talent strategies see significantly higher value than those winging it.

The new skill: AI orchestration

The most valuable employees are becoming AI team leaders. Instead of managing 10 junior staff, a single expert manages 10 AI systems with no juniors needed at all.

As Ethan Mollick argues, AI systems should be managed "as additional team members" rather than impersonal software. The human's job shifts to coordination: knowing how to orchestrate hundreds or thousands of agents working in parallel. This idea that AI software should be accounted for as headcount is actually the main reason why AI labs have the insane valuations they do.

We saw this in our sales retros. Instead of herding people into 90-minute meetings, each rep had a 15-minute AI-guided conversation. The AI handled probing, clarifying, and summarizing. One manager could synthesize insights from the entire team without the coordination overhead.

Measure what matters: outcomes per employee, not headcount

Traditional metrics break in an AI-native world. Stop measuring by headcount or hours worked. Start measuring by outcomes achieved per employee, with AI in the mix.

When our PM started shipping bug fixes independently using agents, it wasn't a demo. It was the new bar. That's the leverage you're building toward: top individuals accomplishing what used to require entire teams.

The competitive moat is culture and capability

AI transformation isn't about deploying better models. It's about building organizations where:

- People are invested enough in outcomes to change their behavior

- Teams are small, elite, and highly AI-proficient

- Workflows are redesigned around AI capabilities, not bolted on afterward

- Leaders model AI adoption and provide clear vision for the future

The gap between AI-native companies and traditional ones is already insurmountable in some sectors. As we detailed in the Four Elements framework, you can't technology your way out of a culture problem.

The winners aren't using AI to produce more outputs faster. They're using AI to improve their thinking, refine their processes, and build deeper capabilities. They treat AI as a team member and catalyst for new ways of working, not just a productivity plugin.

Start with three questions

In your next leadership meeting, ask:

How invested are our people in company outcomes? If they're not willing to change their workflows for better results, AI transformation will fail. This starts with hiring and gets reinforced through rituals, customer centricity, and compensation.

Which elite teams can we create to show the way? Talent density takes time to build, but you can create high-density sub-orgs that spread best practices through the rest of the organization.

Are we redesigning workflows or just adding AI to existing processes? Half of AI high-performers are using AI to fundamentally transform business processes. Most laggards just layer it on top.

The uncomfortable conclusion

Here's what nobody wants to say out loud: you probably have too many people, and AI isn't going to fix that. It will only make it worse.

The future isn't 90 employees doing what 100 used to do. No.

It's 10 AI-proficient employees doing what 100 couldn't do before.

Getting there requires rethinking everything: how you hire, how you structure teams, how you incentivize top talent, how you measure performance, how you design workflows. It requires investing in the "soft" cultural work that most companies skip.

But the alternative is competing with armies of 1× workers against rivals whose every employee functions like a 10× or 100× powerhouse.

The real moat in an AI-native world isn't the technology. It's the culture and talent capability to actually use it. Grappling with this will be the true leadership challenge for years to come.